FILM-MATCH SPECTRA

This is how FilmMatch Spectra was built. Let’s get a little technical!

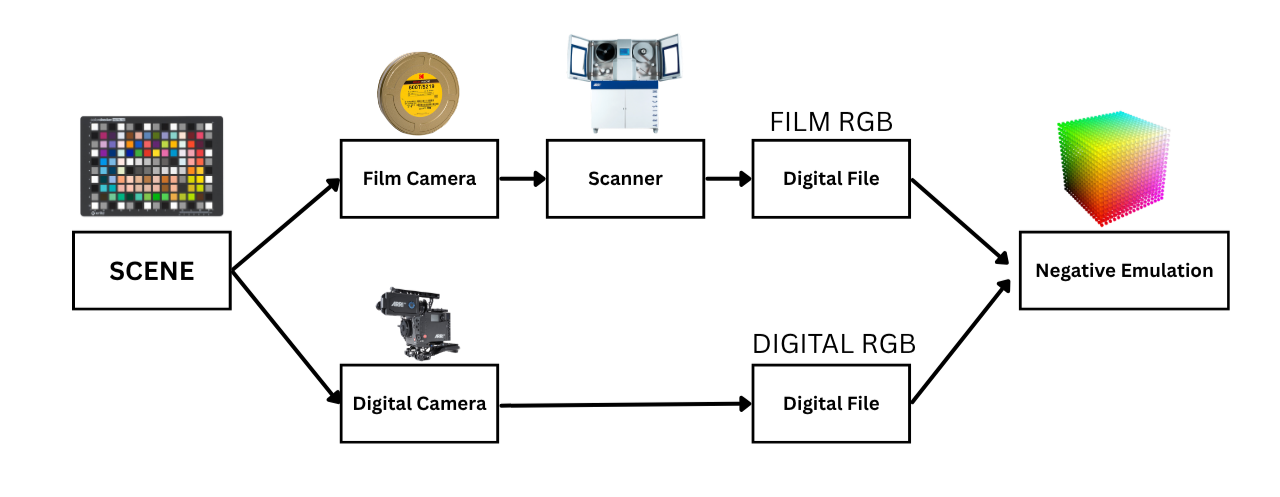

Let’s first take a look at a traditional film emulation workflow.

As you can see in the diagram above, a classic film emulation workflow consists of pointing a film camera and a digital camera at the same scene, either a chart or something more complex, and shooting it across the whole exposure range. The negative is then scanned (for a negative emulation) or printed and then scanned (for a print emulation), so that we have a digital file for the film stock. The emulation is built by comparing the RGB values of the digital camera with the RGB values of the scanned film and, through various techniques (manual matching or scattered data interpolation), matching one to the other. This workflow works very well and, if the data collection is done right, can yield very accurate results. The drawbacks are that we treat the film system as a black box, and the tiniest mistakes in the data capture part of the process can lead to massive headaches and inaccuracies further down the line.

Let me introduce you to a new way of thinking about film emulation!

Spectral Simulation

What if we did the same thing, but in a simulation? Let me explain.

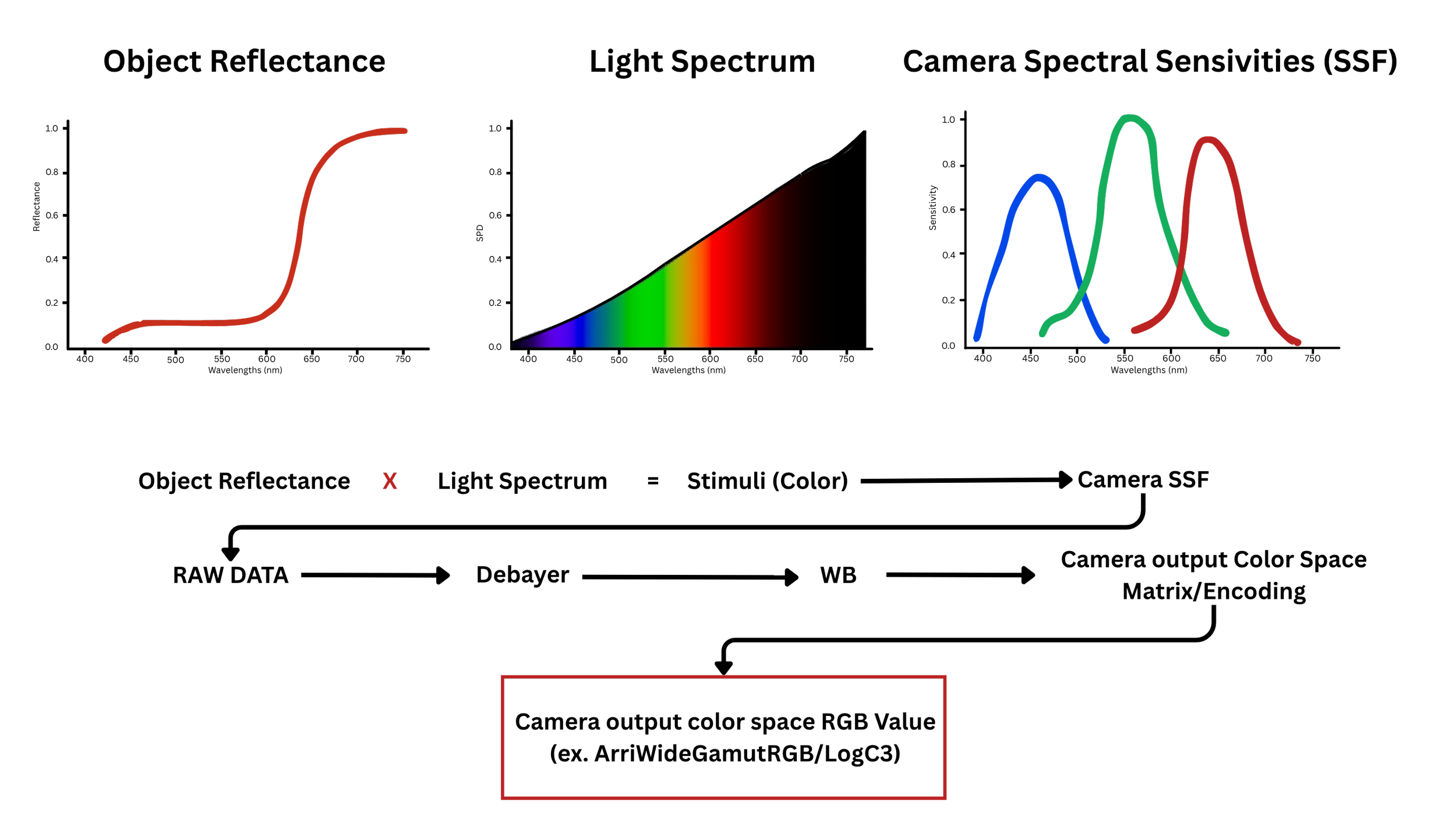

If we know how a given camera or film stock responds to light at every wavelength across the visible spectrum, we can accurately simulate how any color recorded by that camera or film stock will be rendered. For a digital camera, this means measuring or obtaining its Spectral Sensitivity Functions (SSF). Let’s see how it works for a digital camera first.

The journey starts with the reflectance of an object and the spectral power distribution of the light source illuminating it. Their product, wavelength by wavelength, defines the stimulus (what we normally refer to as a color). The stimulus is then observed by the camera through its Spectral Sensitivities (SSF), which define what the sensor sees and captures when a stimulus hits it. The raw data from the sensor is then debayered and white balanced, and finally the output color space matrix and the gamma/log encoding are applied to go from the linear native space of the sensor to a defined camera output color space (for example ARRI Wide Gamut RGB/LogC3 or S-Gamut3.Cine/S-Log3). If we know the Spectral Sensitivities of a camera, we can know exactly what RGB values that camera will produce for any stimulus (color) it encounters.
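To make the digital chain concrete, here is a minimal Python sketch of it. Everything in it is an assumption for illustration: the 10 nm wavelength grid, the bell-shaped SSFs (real ones come from measurement or manufacturer data), the identity matrix and the generic log curve standing in for LogC3 or S-Log3. Debayering is skipped because the simulation produces a full RGB triplet per stimulus.

```python
import math

# Hypothetical 10 nm sampling of the visible spectrum (380-780 nm).
WAVELENGTHS = list(range(380, 781, 10))


def bell(center_nm, width_nm):
    """Hypothetical bell-shaped curve sampled on WAVELENGTHS."""
    return [math.exp(-0.5 * ((wl - center_nm) / width_nm) ** 2) for wl in WAVELENGTHS]


# Hypothetical camera SSFs (NOT measured data): R, G, B channels.
CAMERA_SSF = [bell(600, 30), bell(540, 35), bell(460, 25)]


def make_stimulus(reflectance, illuminant):
    """Stimulus = object reflectance x light source power, wavelength by wavelength."""
    return [r * e for r, e in zip(reflectance, illuminant)]


def sensor_response(stimulus, ssf=CAMERA_SSF):
    """Linear raw RGB: sum the stimulus weighted by each channel's SSF."""
    return [sum(s * w for s, w in zip(stimulus, channel)) for channel in ssf]


def white_balance(rgb, white_rgb):
    """Per-channel gains so that a perfect white under this light reads (1, 1, 1)."""
    return [c / w for c, w in zip(rgb, white_rgb)]


def apply_matrix(matrix, rgb):
    """3x3 matrix from the sensor's native space to the output gamut."""
    return [sum(matrix[i][j] * rgb[j] for j in range(3)) for i in range(3)]


def log_encode(x):
    """Hypothetical generic log curve (a stand-in, NOT LogC3 or S-Log3)."""
    return math.log10(1.0 + 100.0 * max(x, 0.0)) / 2.0


def camera_rgb(reflectance, illuminant, matrix):
    """Reflectance + light source -> encoded camera RGB."""
    raw = sensor_response(make_stimulus(reflectance, illuminant))
    white = sensor_response(illuminant)  # a perfect white reflector under the same light
    linear = apply_matrix(matrix, white_balance(raw, white))
    return [log_encode(c) for c in linear]


IDENTITY = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
flat_light = [1.0] * len(WAVELENGTHS)
grey_18 = [0.18] * len(WAVELENGTHS)
print(camera_rgb(grey_18, flat_light, IDENTITY))  # R, G, B equal up to float rounding
```

Swapping in measured SSFs, a real illuminant spectrum and the manufacturer's matrix and log curve is what turns this toy into an actual camera model; the structure of the chain stays the same.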

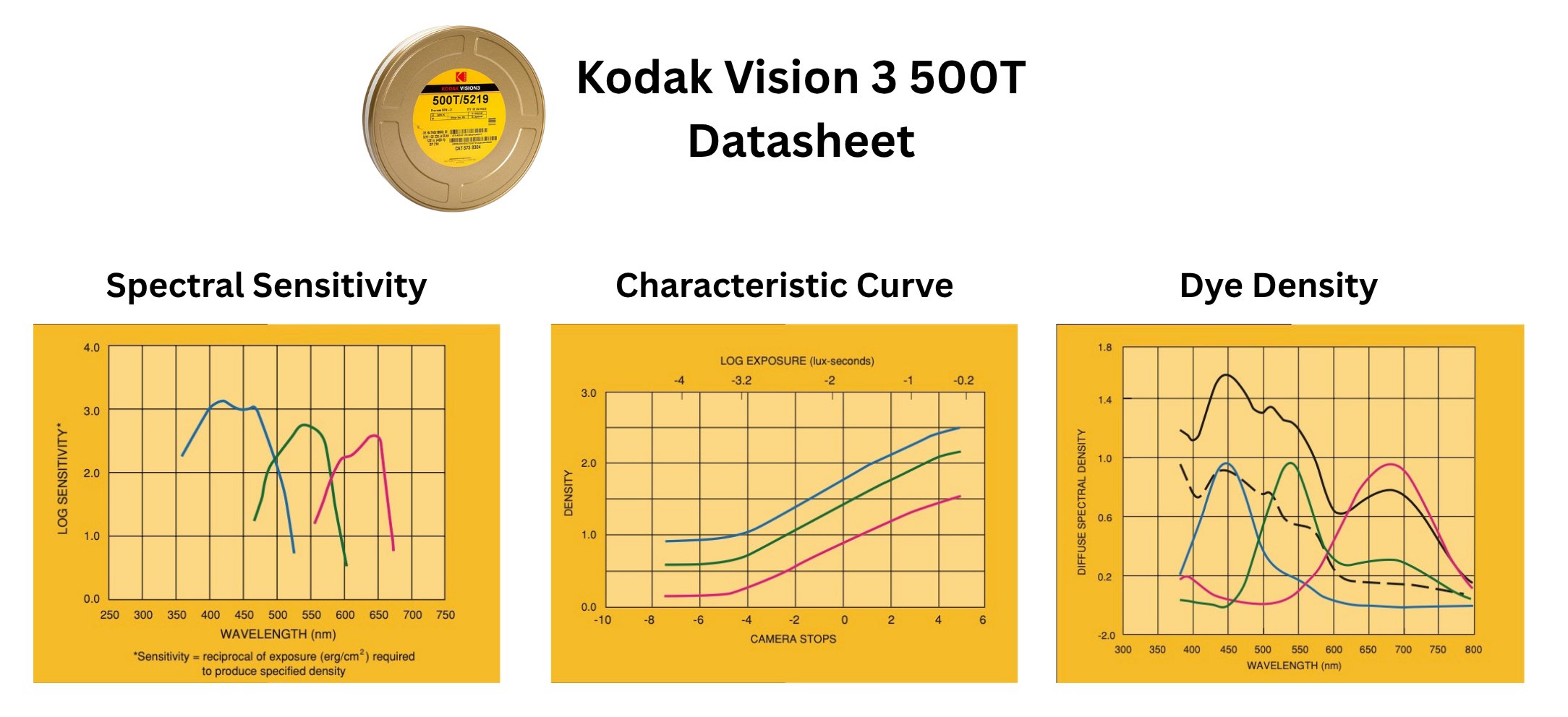

Let’s now take a look at how this works for a film system.

When the stimulus hits the negative, it exposes it. The negative observes (reacts to) this light according to its own Spectral Sensitivities and stores that reaction in a latent image, waiting to be developed. During development, physical dyes are formed, and the Characteristic Curve steps in to dictate exactly how much of each dye is created. Once the dyes are formed and fixed by development, the negative can be thought of as a filter whose transmission is dictated by the density of the Cyan, Magenta and Yellow dyes.
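The negative stage can be sketched the same way. This is a simplified model under loud assumptions: the three layer sensitivities, the dye spectra and the S-shaped characteristic curve below are hypothetical placeholders for the curves you would read from a datasheet, and effects such as the orange mask and interlayer effects are ignored. The red-, green- and blue-sensitive layers form cyan, magenta and yellow dye respectively, and the developed negative is returned as a spectral transmission, i.e. a filter.

```python
import math

WAVELENGTHS = list(range(380, 781, 10))  # hypothetical 10 nm grid


def bell(center_nm, width_nm):
    """Hypothetical bell-shaped curve sampled on WAVELENGTHS."""
    return [math.exp(-0.5 * ((wl - center_nm) / width_nm) ** 2) for wl in WAVELENGTHS]


# Hypothetical curves, NOT Kodak data.
NEG_SENSITIVITY = [bell(640, 30), bell(550, 30), bell(450, 25)]  # red-, green-, blue-sensitive layers
NEG_DYES = [bell(660, 40), bell(550, 40), bell(450, 35)]  # cyan, magenta, yellow dye spectra


def characteristic_curve(log_exposure, d_min=0.1, d_max=2.2, steepness=1.5, log_e_mid=0.0):
    """Hypothetical S-shaped density vs. log exposure curve (stand-in for a datasheet curve)."""
    return d_min + (d_max - d_min) / (1.0 + math.exp(-steepness * (log_exposure - log_e_mid)))


def develop_negative(stimulus):
    """Expose and develop the negative; return its spectral transmission."""
    # Latent image: the effective exposure each layer receives.
    exposures = [sum(s * w for s, w in zip(stimulus, sens)) for sens in NEG_SENSITIVITY]
    # Development: the characteristic curve dictates how much of each dye forms.
    dye_amounts = [characteristic_curve(math.log10(max(e, 1e-10))) for e in exposures]
    # The negative's spectral density is the sum of its dyes' spectral densities.
    density = [sum(a * dye[i] for a, dye in zip(dye_amounts, NEG_DYES))
               for i in range(len(WAVELENGTHS))]
    # As a filter, the negative transmits 10^(-density) at each wavelength.
    return [10.0 ** (-d) for d in density]
```

A brighter stimulus yields more dye and therefore a darker (less transmissive) negative, which is exactly the inversion the print stage later undoes.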

In the printing process, a light source shines through the negative’s dyes to expose the print (positive). Just like the negative did, the print film observes and reacts to this surviving light with its own unique Spectral Sensitivities, storing that reaction in a brand-new latent image. During print development, physical Cyan, Magenta and Yellow dyes are formed, and the print Characteristic Curve steps in to dictate exactly how much of each dye is created. In the case of motion picture film, the print then behaves once again like a filter, this time for the collimated light of a projector.
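The print stage reuses the same building blocks: the printer light filtered by the negative exposes the print stock, the print characteristic curve sets the print dye amounts, and the projector lamp shines through the result. As before, every curve and parameter here is a hypothetical placeholder rather than datasheet data, and printer light balance (printer points) is left out.

```python
import math

WAVELENGTHS = list(range(380, 781, 10))  # hypothetical 10 nm grid


def bell(center_nm, width_nm):
    """Hypothetical bell-shaped curve sampled on WAVELENGTHS."""
    return [math.exp(-0.5 * ((wl - center_nm) / width_nm) ** 2) for wl in WAVELENGTHS]


# Hypothetical print-stock curves, NOT Kodak data.
PRINT_SENSITIVITY = [bell(680, 20), bell(550, 20), bell(450, 20)]  # red-, green-, blue-sensitive
PRINT_DYES = [bell(650, 40), bell(545, 40), bell(440, 35)]  # cyan, magenta, yellow


def print_curve(log_exposure, d_min=0.05, d_max=3.5, steepness=2.5, log_e_mid=0.0):
    """Hypothetical print characteristic curve (density vs. log exposure)."""
    return d_min + (d_max - d_min) / (1.0 + math.exp(-steepness * (log_exposure - log_e_mid)))


def make_print(neg_transmission, printer_light):
    """Expose the print through the negative and develop it; return its spectral transmission."""
    # Light surviving the negative's dyes.
    surviving = [p * t for p, t in zip(printer_light, neg_transmission)]
    exposures = [sum(light * s for light, s in zip(surviving, sens)) for sens in PRINT_SENSITIVITY]
    dye_amounts = [print_curve(math.log10(max(e, 1e-10))) for e in exposures]
    density = [sum(a * dye[i] for a, dye in zip(dye_amounts, PRINT_DYES))
               for i in range(len(WAVELENGTHS))]
    return [10.0 ** (-d) for d in density]


def project(print_transmission, projector_lamp):
    """Spectral power reaching the screen: the lamp filtered by the print."""
    return [e * t for e, t in zip(projector_lamp, print_transmission)]
```

Note the double inversion: a denser negative lets less printer light through, so the print forms less dye and projects brighter, which is how the negative image becomes a positive.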

Conveniently, Kodak publishes a datasheet for each of its film stocks describing the stock’s behavior across this pipeline, including spectral sensitivity curves, characteristic curves and spectral dye density curves.
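Datasheet curves are published as plots, so in practice they have to be digitized and resampled onto the simulation’s wavelength grid. Kodak plots spectral sensitivity on a log scale, so a digitized curve needs converting back to linear before it can be used as a weighting function. A minimal sketch, assuming the curve has been digitized into hypothetical (wavelength in nm, log sensitivity) pairs: interpolate in the log domain, convert with 10^x, treat wavelengths outside the plotted range as zero sensitivity, and normalize the peak to 1.

```python
import bisect


def resample(points, grid):
    """Linearly interpolate (x, y) points onto grid; None outside the data range."""
    points = sorted(points)
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    out = []
    for x in grid:
        if x < xs[0] or x > xs[-1]:
            out.append(None)  # not plotted on the datasheet
        elif x == xs[-1]:
            out.append(ys[-1])
        else:
            i = bisect.bisect_right(xs, x) - 1  # xs[i] <= x < xs[i + 1]
            t = (x - xs[i]) / (xs[i + 1] - xs[i])
            out.append(ys[i] + t * (ys[i + 1] - ys[i]))
    return out


def log_sensitivity_to_linear(points, grid):
    """Digitized log-sensitivity points -> peak-normalized linear sensitivity on grid."""
    log_s = resample(points, grid)
    linear = [0.0 if v is None else 10.0 ** v for v in log_s]
    peak = max(linear)
    return [v / peak for v in linear] if peak > 0 else linear


# Hypothetical digitized points for one layer, NOT real datasheet values.
digitized = [(400, 0.0), (450, 1.0), (500, 2.0)]
print(log_sensitivity_to_linear(digitized, [390, 400, 425, 450, 500, 510]))
```

Interpolating before the 10^x conversion keeps the in-between values consistent with how the curve was drawn on the log-scale plot.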

OK, but how does this relate to film emulation specifically? And how do we build a pipeline from all of this?

Well, since every component of the two pipelines we talked about (digital and film) is already defined mathematically, we can do something along these lines:

We take the spectral reflectance of an object and the power distribution of the light hitting it, and observe the resulting stimulus through both pipelines: how does the digital pipeline render it, and how does the film pipeline render it? Once we have repeated this procedure for many different stimuli, we end up with two datasets: the digital camera’s response to those stimuli and the film stock’s response.
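Here is a minimal sketch of the dataset-building step. The two pipeline functions are deliberately tiny stand-ins (hypothetical SSFs and film sensitivities, with the film side reduced to per-layer log exposure); in a real setup you would plug in the full camera and film simulations instead. The synthetic reflectances (a few random smooth bumps) and the exposure sweep in stops are also assumptions, chosen to echo shooting a chart across the exposure range.

```python
import math
import random

WAVELENGTHS = list(range(380, 781, 10))  # hypothetical 10 nm grid


def bell(center_nm, width_nm):
    """Hypothetical bell-shaped curve sampled on WAVELENGTHS."""
    return [math.exp(-0.5 * ((wl - center_nm) / width_nm) ** 2) for wl in WAVELENGTHS]


CAMERA_SSF = [bell(600, 30), bell(540, 35), bell(460, 25)]  # hypothetical
FILM_SENSITIVITY = [bell(640, 30), bell(550, 30), bell(450, 25)]  # hypothetical


def integrate(stimulus, curves):
    """Weight the stimulus by each curve and sum over wavelength."""
    return [sum(s * c for s, c in zip(stimulus, curve)) for curve in curves]


def digital_pipeline(stimulus):
    """Stand-in for the full camera simulation: linear sensor RGB."""
    return integrate(stimulus, CAMERA_SSF)


def film_pipeline(stimulus):
    """Stand-in for the full film simulation: log exposure of each layer."""
    return [math.log10(max(e, 1e-10)) for e in integrate(stimulus, FILM_SENSITIVITY)]


def random_reflectance(rng, n_bumps=3):
    """Smooth synthetic reflectance: a low floor plus random bumps, clipped to 1."""
    refl = [0.05] * len(WAVELENGTHS)
    for _ in range(n_bumps):
        bump = bell(rng.uniform(400, 700), rng.uniform(20, 80))
        weight = rng.uniform(0.0, 0.6)
        refl = [min(r + weight * b, 1.0) for r, b in zip(refl, bump)]
    return refl


def build_datasets(illuminant, n_reflectances, stops=(-2, -1, 0, 1, 2), seed=0):
    """Run the same stimuli through both pipelines; return paired (digital, film) lists."""
    rng = random.Random(seed)
    digital, film = [], []
    for _ in range(n_reflectances):
        base = [r * e for r, e in zip(random_reflectance(rng), illuminant)]
        for stop in stops:  # bracket the exposure, like shooting a chart
            stimulus = [s * 2.0 ** stop for s in base]
            digital.append(digital_pipeline(stimulus))
            film.append(film_pipeline(stimulus))
    return digital, film


digital, film = build_datasets([1.0] * len(WAVELENGTHS), n_reflectances=200)
print(len(digital), len(film))  # 1000 1000
```

Because the stimuli are generated rather than photographed, biasing `random_reflectance` toward the colors that matter most (skin tones, foliage, saturated primaries) is just a matter of changing how the bumps are drawn.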

From there we can use whatever matching tool or technique we like (I use my own ColourMatch algorithm) to match the digital camera response to the film stock response, just as we would with measured data. The advantage of this workflow is that we are not limited by the precision or the number of stimuli we feed into the two pipelines: we can create as many stimuli as we want in the simulation, and tailor the datasets to the types of stimuli we care about the most.
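ColourMatch itself isn’t public, so as a stand-in here is a generic scattered data interpolation technique, inverse distance weighting (Shepard’s method), applied to the two paired datasets. This is NOT the author’s ColourMatch algorithm; it’s a simple, well-known technique chosen for illustration. The function names, the `power` parameter and the 17-point LUT size are assumptions, and both datasets must be in the same encoding the LUT will be applied to.

```python
def idw_match(source_rgb, target_rgb, query, power=2.0, eps=1e-12):
    """Map a digital RGB to film RGB by inverse distance weighting over paired samples."""
    num = [0.0, 0.0, 0.0]
    den = 0.0
    for src, tgt in zip(source_rgb, target_rgb):
        d2 = sum((a - b) ** 2 for a, b in zip(src, query))
        if d2 < eps:
            return list(tgt)  # query sits on a sample: return it exactly
        w = d2 ** (-power / 2.0)  # weight = 1 / distance^power
        num = [n + w * t for n, t in zip(num, tgt)]
        den += w
    return [n / den for n in num]


def bake_lut(source_rgb, target_rgb, size=17):
    """Evaluate the match on a size^3 grid; red varies fastest, as in .cube files."""
    step = 1.0 / (size - 1)
    return [idw_match(source_rgb, target_rgb, [r * step, g * step, b * step])
            for b in range(size) for g in range(size) for r in range(size)]


source = [[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]]  # hypothetical digital samples
target = [[0.0, 0.0, 0.0], [0.5, 0.6, 0.7]]  # hypothetical film samples
print(idw_match(source, target, [0.5, 0.5, 0.5]))  # equidistant: average of both targets
```

IDW is easy to reason about but drifts toward the dataset average far from any sample; a smoother interpolator such as radial basis functions is a common alternative when baking a production LUT.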

Daniele Siragusano (color scientist at FilmLight) used a similar spectrally based approach when he created the look for Tribes of Europa. You can listen to the interview here, starting at 26:25: https://vimeo.com/521822858?fl=pl&fe=sh

I still think that measuring real-world charts through the photochemical process has its purpose, and I’ll keep doing that as well. With real-world measured data you are not relying on Kodak’s measurements and datasheets, and you can target a specific film stock with a specific development process at a specific lab. That being said, the real-world measurement approach is more time-consuming, and it behaves as a black box: you only see the rendering at the end of the pipeline, without full control over the individual components.

With the spectral simulation pipeline, one can easily swap the light source and say: “I created this emulation LUT using a tungsten source; let me see how it changes if I use daylight instead.” Or: “Actually, let’s try the specific LED the DP wants to shoot with.” Or: “Let’s change the stimuli, or even mix and match data from different film stocks.”
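Swapping the light source just means swapping the illuminant spectrum fed to both pipelines. As a minimal sketch, the snippet below generates blackbody spectra with Planck’s law: a 3200 K blackbody is a reasonable model of a tungsten source, while a 5600 K blackbody is only a rough stand-in for daylight (real daylight is better described by the CIE D-series illuminants), and a specific LED would need its measured spectral power distribution. The 10 nm grid and the 560 nm normalization point are arbitrary choices.

```python
import math

C2 = 1.4388e-2  # second radiation constant, in m*K


def planck(wavelength_nm, temperature_k):
    """Relative blackbody spectral radiance (Planck's law without the constant prefactor)."""
    lam = wavelength_nm * 1e-9
    return 1.0 / (lam ** 5 * (math.exp(C2 / (lam * temperature_k)) - 1.0))


def blackbody_spd(temperature_k, wavelengths, norm_nm=560):
    """Blackbody SPD sampled on wavelengths, normalized to 1.0 at norm_nm."""
    ref = planck(norm_nm, temperature_k)
    return [planck(wl, temperature_k) / ref for wl in wavelengths]


WAVELENGTHS = list(range(380, 781, 10))
tungsten = blackbody_spd(3200, WAVELENGTHS)
daylight_approx = blackbody_spd(5600, WAVELENGTHS)  # rough stand-in, NOT CIE D55/D65

i450 = WAVELENGTHS.index(450)
print(round(tungsten[i450], 3), round(daylight_approx[i450], 3))  # tungsten has far less blue
```

Each SPD can then be passed as the illuminant to both the digital and the film simulation and the match rebuilt, so the effect of the new light source on the emulation can be compared directly.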

At the end of the day, the real-world measured data approach and the spectral simulation approach are just two different ways to bring the magic of film back into the digital world.